An introduction to reinforcement learning from human feedback and post-training

Nathan Lambert

Quito, Ecuador

11 March 2026

A cursory overview of RLHF, RLVR, and modern post-training recipes for language models.

What is a language model?

Core properties:

- A language model assigns probabilities to text.

- Text is broken into chunks called tokens, which are the model's internal representation of text.

- Given previous tokens, it predicts the next token. Repeating this produces a completion one step at a time (this is called autoregressive).
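A minimal sketch of these three properties. The bigram table below is a made-up toy (hypothetical tokens and probabilities), standing in for a real model that conditions on the whole prefix:

```python
import random

# Hypothetical toy "language model": next-token probabilities that depend
# only on the previous token. Real models condition on the full prefix.
BIGRAM = {
    "<s>": {"the": 0.7, "a": 0.3},
    "the": {"cat": 0.5, "dog": 0.5},
    "a": {"cat": 0.4, "dog": 0.6},
    "cat": {"sat": 0.6, "</s>": 0.4},
    "dog": {"sat": 0.5, "</s>": 0.5},
    "sat": {"</s>": 1.0},
}


def next_token_probs(tokens):
    """Return P(next token | previous tokens) for the toy model."""
    return BIGRAM[tokens[-1]]


def sequence_prob(tokens):
    """Probability assigned to a whole sequence (product of next-token probs)."""
    prob = 1.0
    for i in range(1, len(tokens)):
        prob *= next_token_probs(tokens[:i]).get(tokens[i], 0.0)
    return prob


def generate(rng, max_tokens=10):
    """Sample one token at a time until the end token (autoregressive)."""
    tokens = ["<s>"]
    while tokens[-1] != "</s>" and len(tokens) < max_tokens:
        probs = next_token_probs(tokens)
        choices, weights = zip(*probs.items())
        tokens.append(rng.choices(choices, weights=weights, k=1)[0])
    return tokens


print(sequence_prob(["<s>", "the", "cat", "sat", "</s>"]))  # 0.7 * 0.5 * 0.6 * 1.0
print(generate(random.Random(0)))
```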

What is a (modern) language model?

Modern language models:

- Have billions to trillions of parameters.

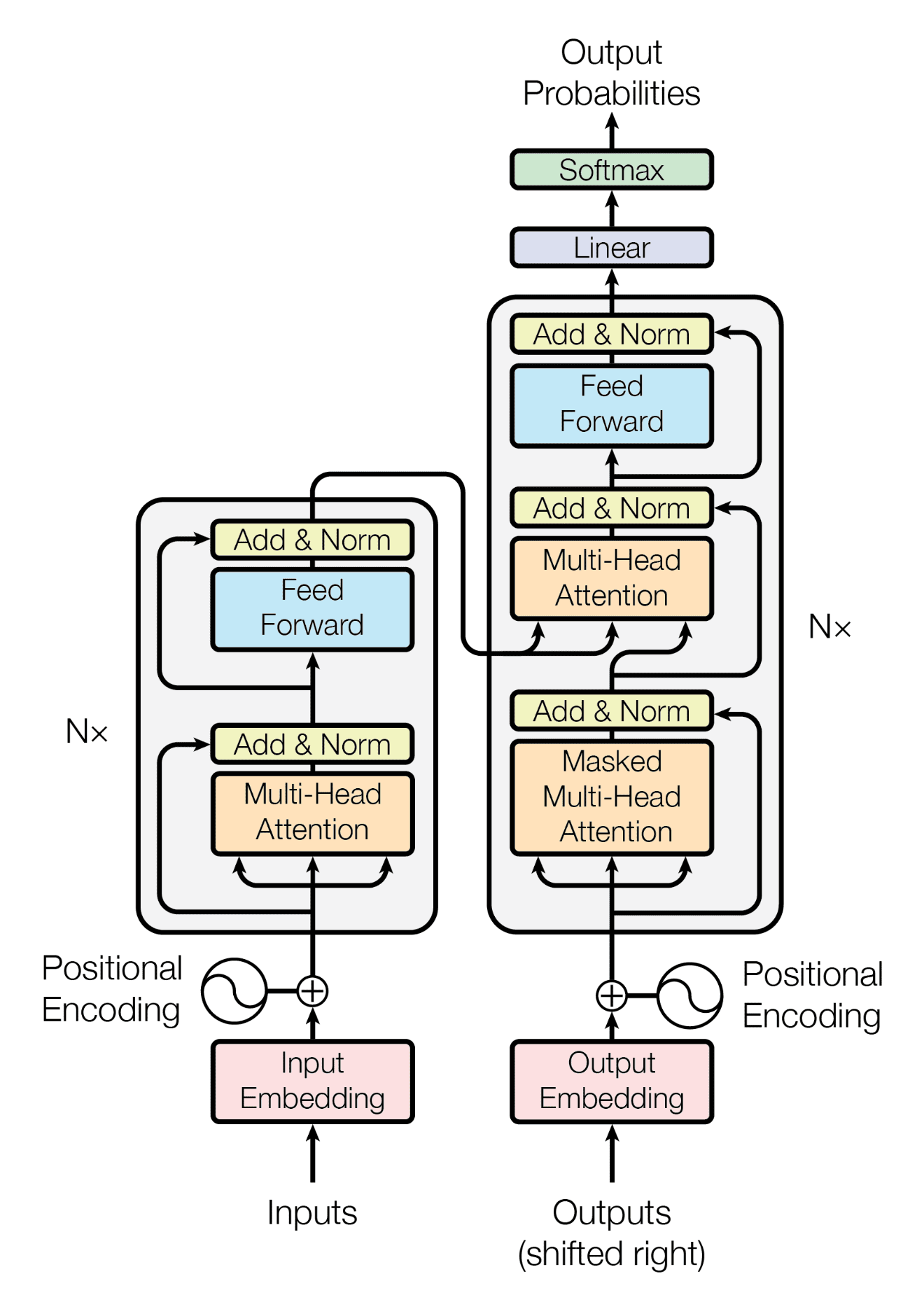

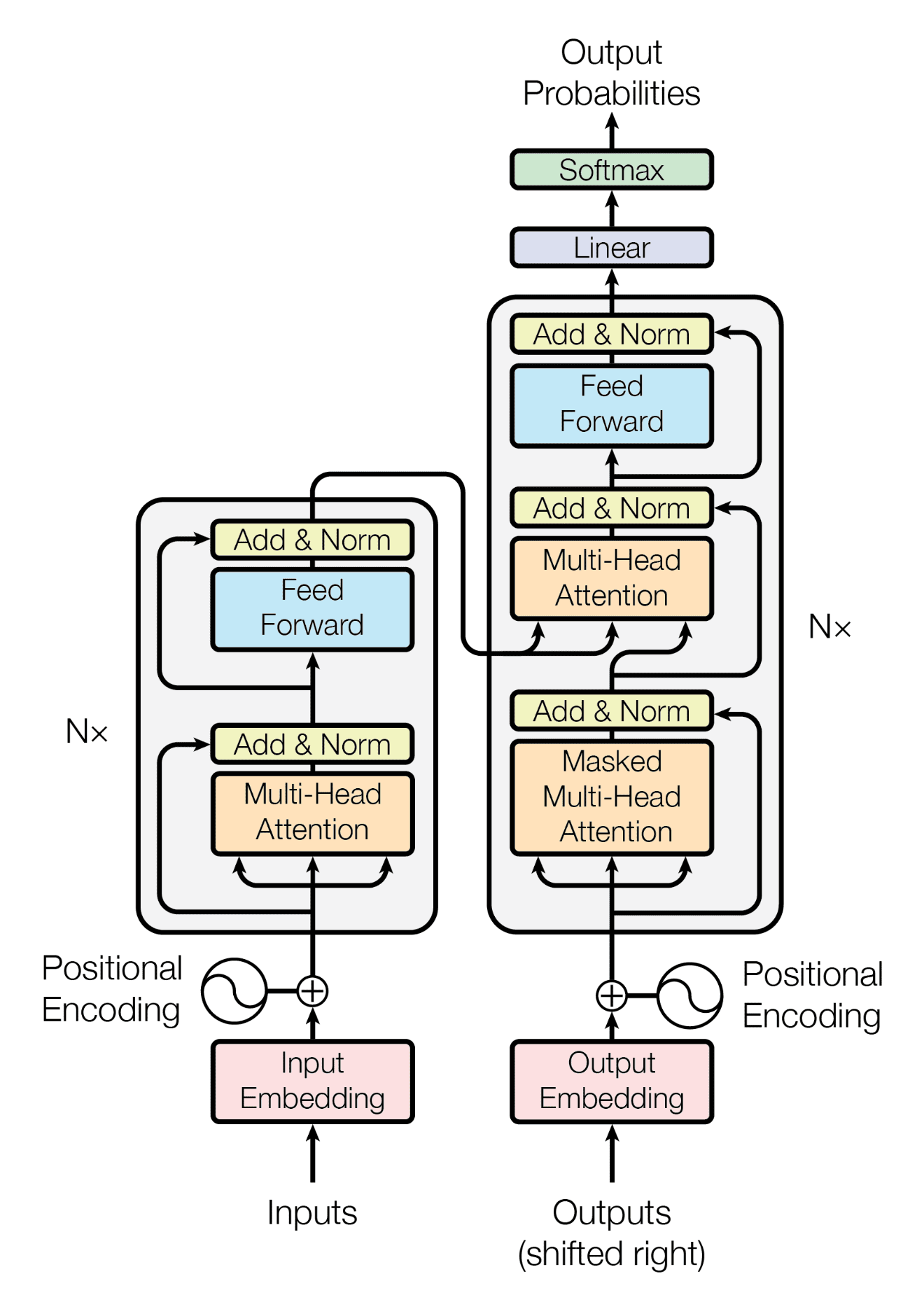

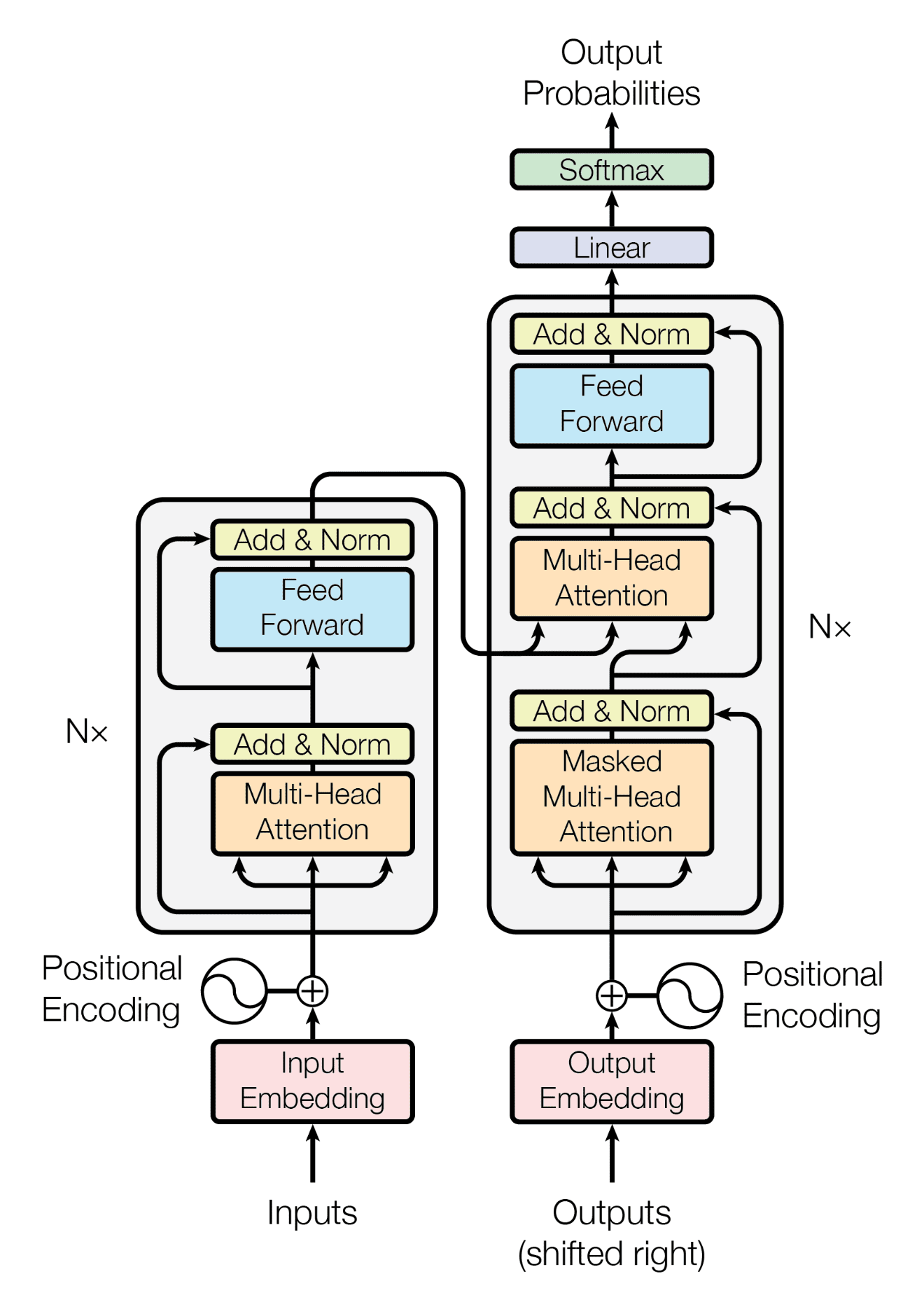

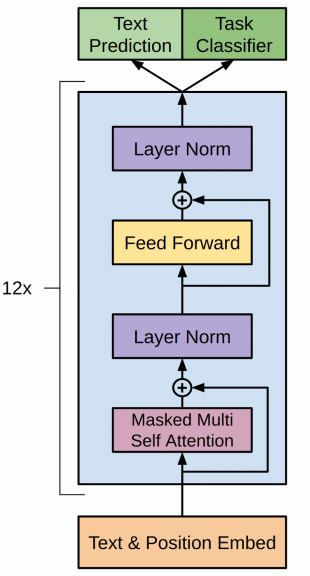

- Largely downstream of the Transformer architecture, which popularized the use of the self-attention mechanism along with fully-dense layers.

- Predict and work over much more than text: Gemini and ChatGPT work with images, audio, and video.

2017: The Transformer is born

- 2017: the Transformer is born

2018: GPT-1, ELMo, and BERT

- 2018: GPT-1, ELMo, and BERT released

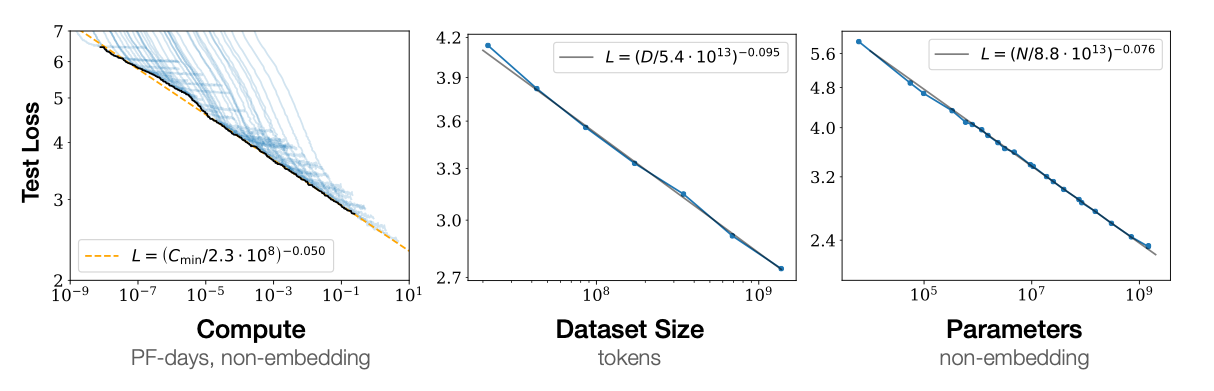

2019: GPT-2 and scaling laws

- 2019: GPT-2 and scaling laws

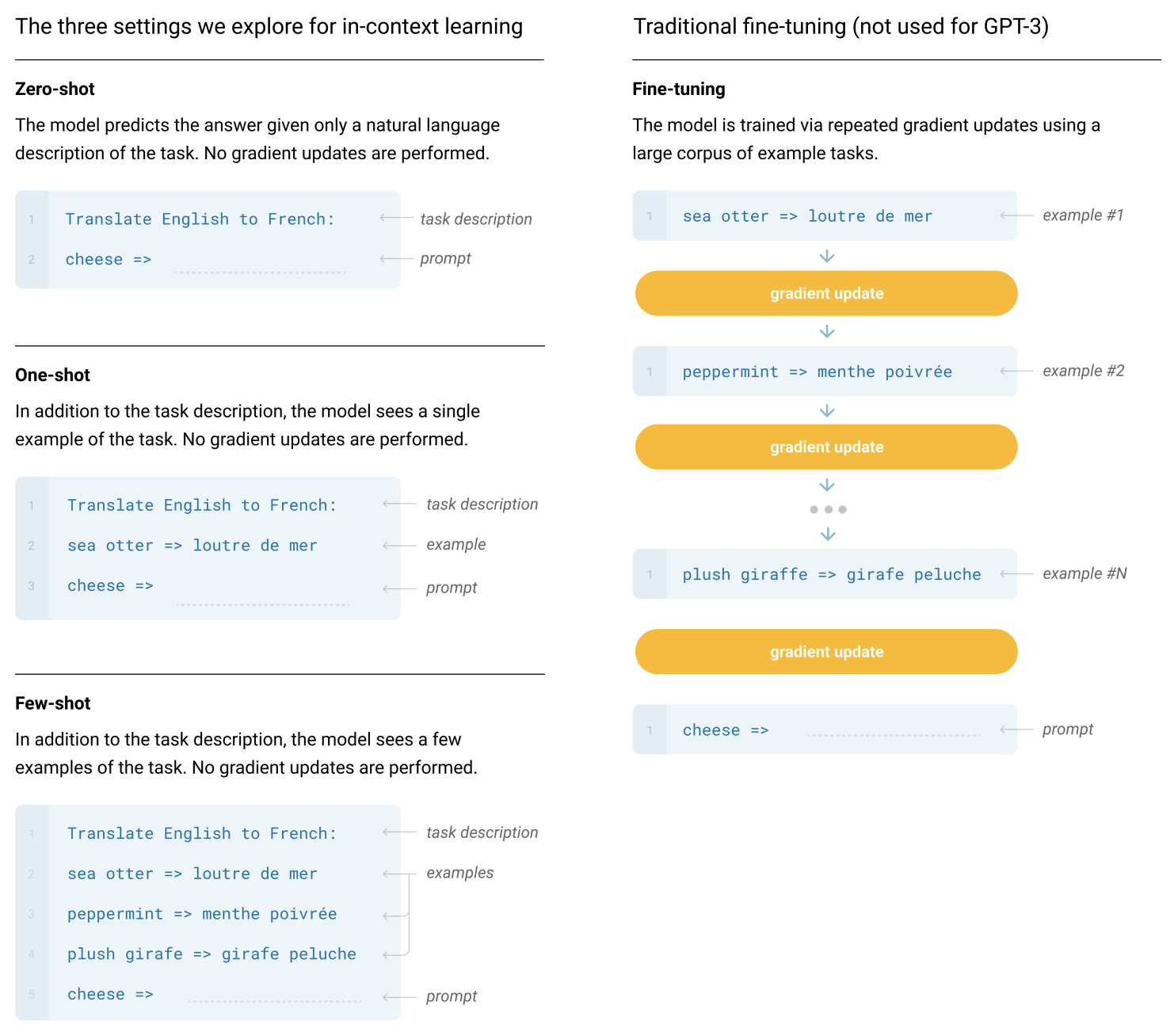

2020: GPT-3 surprising capabilities

- 2020: GPT-3 surprising capabilities

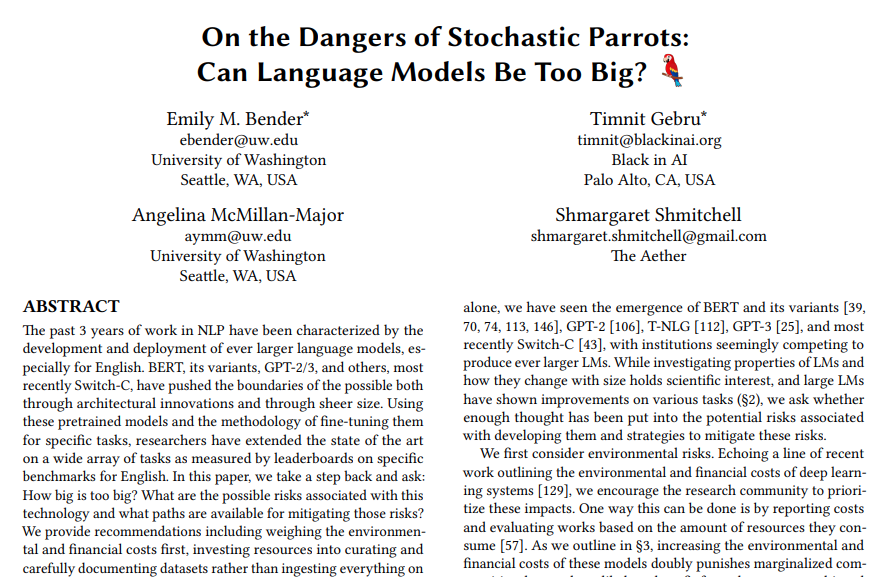

2021: Stochastic Parrots

- 2021: Stochastic Parrots

2022: ChatGPT

- 2022: ChatGPT

2023: GPT-4 and frontier-scale

- 2023: GPT-4 and frontier-scale

2024: o1 and reasoning models

- 2024: o1 and reasoning models

2025: o3, Claude Code, and agents

- 2025: o3, Claude Code, and agents

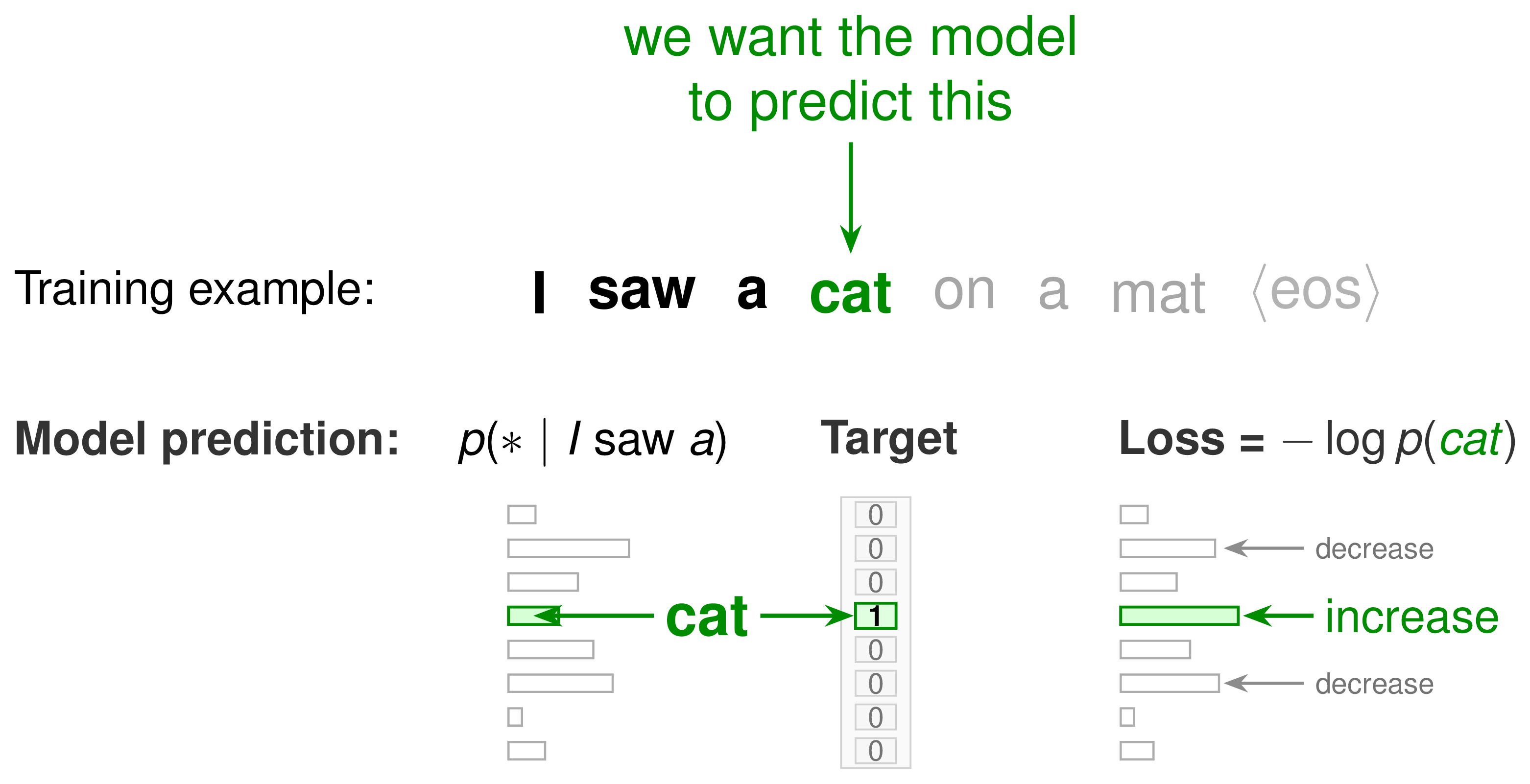

Pretraining: next-token prediction

- Train on trillions of tokens of text from the web, books, code, and documents

- Models are often trained on 5-50+ trillion tokens

- 1T of text tokens is about 3-5 TB of data

- Labs gather and filter 10-20X more data than is used for the model

- Total data funnel targeted for models is on the order of petabytes

- Objective: predict the next token in each sequence

- Result: Incredible, flexible, useful models
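The objective can be sketched as an average negative log-likelihood of each actual next token. This is an illustrative stand-alone function: `predicted_probs` is a hypothetical stand-in for the model's output distributions, not a real training loop:

```python
import math


def next_token_loss(predicted_probs, token_ids):
    """Average negative log-likelihood of each actual next token.

    predicted_probs[t] is the model's distribution over the vocabulary for
    position t + 1, given the tokens up to and including position t.
    """
    total = 0.0
    for t in range(len(token_ids) - 1):
        target = token_ids[t + 1]
        total += -math.log(predicted_probs[t][target])
    return total / (len(token_ids) - 1)


# A 3-token vocabulary and the sequence [0, 2, 1].
confident = [[0.1, 0.2, 0.7], [0.1, 0.8, 0.1]]  # puts mass on the true next token
uniform = [[1 / 3] * 3, [1 / 3] * 3]            # knows nothing
print(next_token_loss(confident, [0, 2, 1]))
print(next_token_loss(uniform, [0, 2, 1]))      # equals log(3)
```

Lower loss means the model put more probability on the text that actually came next.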

A base model completes text

After pretraining we are left with a glorified autocomplete model, for example:

The president of the United States in 2006 was

George W. Bush, the governor of Florida in 2006 was Jeb Bush, and John McCain was an Arizona senator in 2006...

Post-training makes it answer like a chatbot

The earliest modern post-trained (or RLHF-tuned) models shifted the continuation format so that it always conformed to an “answering a question” style. An example of what early conversational models looked like is below:

The president of the United States in 2006 was

George W. Bush was the president of the United States in 2006. He served two terms in office, from January 20, 2001, to January 20, 2009.

ChatGPT was when RLHF made the models even easier to use

Model responses evolved quickly to have:

- Better format: direct, conversational answers

- Better style: helpful, concise, markdown, etc.

- Better product: people could actually use it every day

I'm giving a talk on RLHF tomorrow. Can you help me structure it?

Absolutely — here's a simple structure:

1. Start with the basics

- What a language model is

- Why next-token prediction is not enough

2. Explain post-training

- Instruction tuning

- Preference tuning / RLHF

- RLVR for verifiable tasks

3. End with why it matters

- Better products like ChatGPT

- Better reasoning in models like o1 ...

So what is reinforcement learning from human feedback (RLHF) anyways?

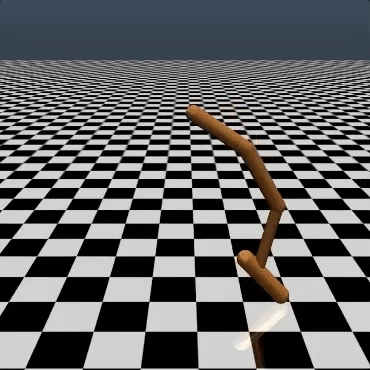

Which is the better backflip?

Why did people make RLHF?

- Many objectives are easy for humans to judge, but hard to write as an exact reward function

- In language models, what we want is often implicit: follow intent, be helpful, be harmless

- Pretraining optimizes next-token prediction, not assistant behavior

- Preference comparisons turn those human judgments into a scalable training signal

RLHF lets us optimize for behavior we can evaluate, even when we cannot easily specify the reward.

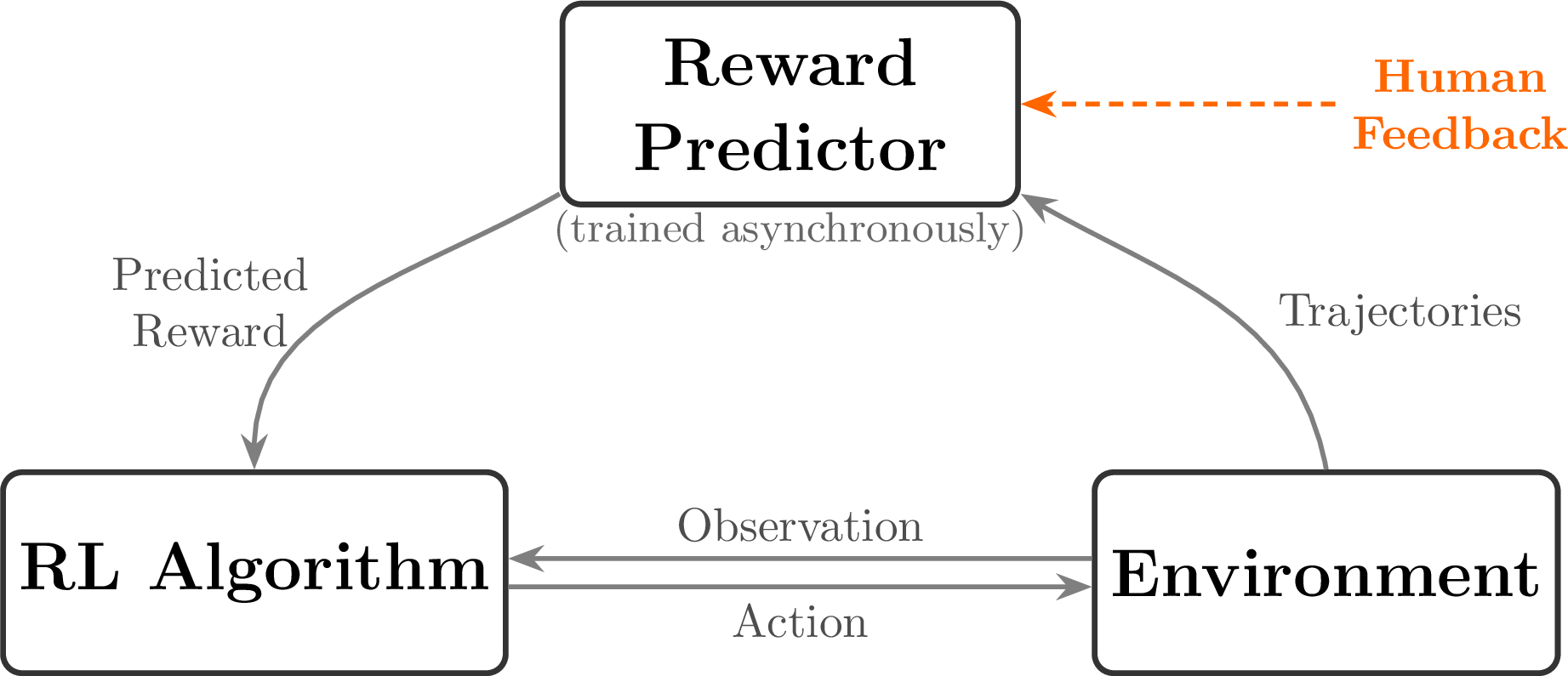

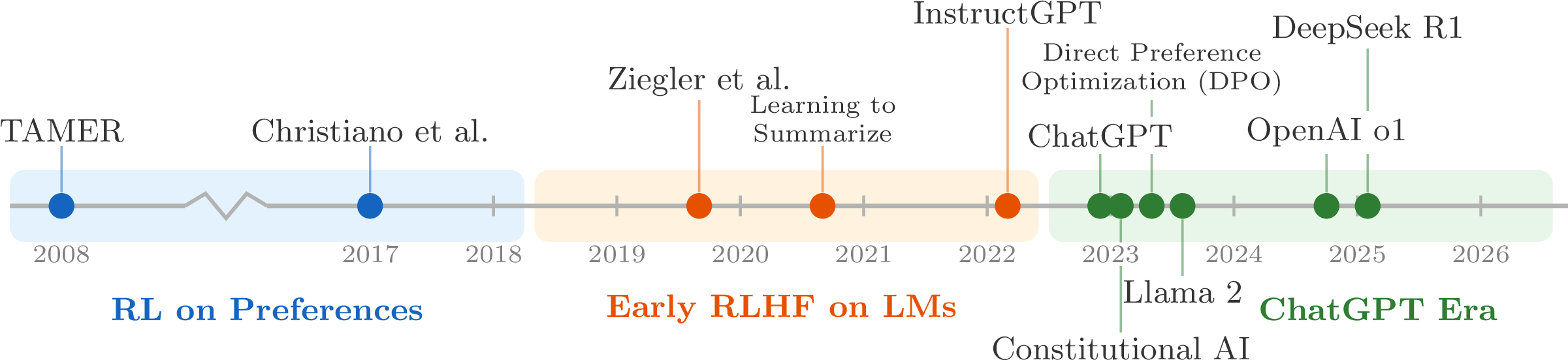

RLHF before language models

- TAMER (Knox & Stone, 2008) — humans score agent actions to learn a reward

- Christiano et al. 2017 — RLHF on Atari trajectory preferences

- Ziegler et al. 2019 — first RLHF on language models

RLHF for language models: compare two completions

Explain the moon landing to a 6-year-old.

The Apollo program culminated in a successful lunar landing in 1969. Astronauts used a spacecraft to descend to the moon's surface and collect samples before returning to Earth.

Explain the moon landing to a 6-year-old.

People built a special rocket to go to the moon. Two astronauts landed there, walked around, and came home safely to tell everyone what they saw.

Left: Human feedback; Right: Hand-designed reward function

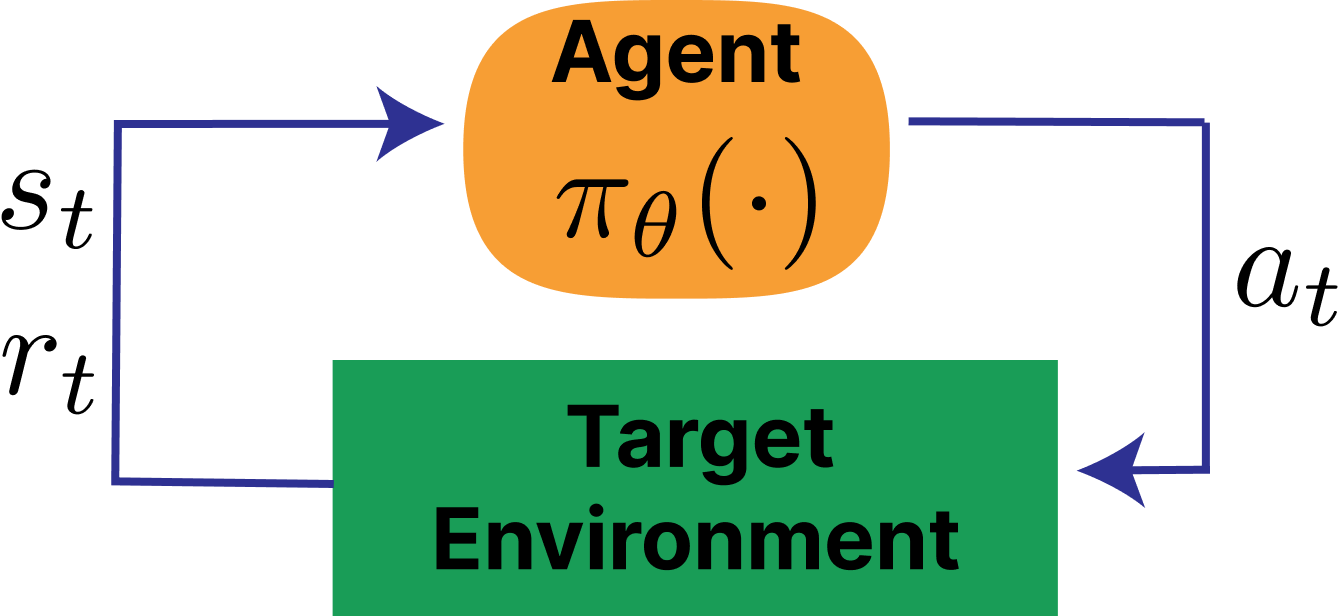

Classical RL

A reinforcement learning problem is often written as a Markov Decision Process (MDP):

- state space \mathcal{S}, action space \mathcal{A}

- transition dynamics P(s_{t+1}\mid s_t, a_t)

- reward function r(s_t, a_t) and discount \gamma

- optimize cumulative return over a trajectory
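As a small worked example, the cumulative (discounted) return of one trajectory is the sum of rewards r_t weighted by \gamma^t. The reward values below are made up for illustration:

```python
def discounted_return(rewards, gamma):
    """Sum of gamma**t * rewards[t], computed backwards over the trajectory."""
    g = 0.0
    for r in reversed(rewards):
        g = r + gamma * g
    return g


print(discounted_return([1.0, 1.0, 1.0], gamma=0.5))  # 1 + 0.5 + 0.25 = 1.75
```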

Classical RL vs. RLHF

Classical RL

- Agent takes actions a_t in an environment with states s_t

- Reward is a known function r(s_t, a_t) from the environment per step

- Optimize cumulative return over a trajectory (total steps T)

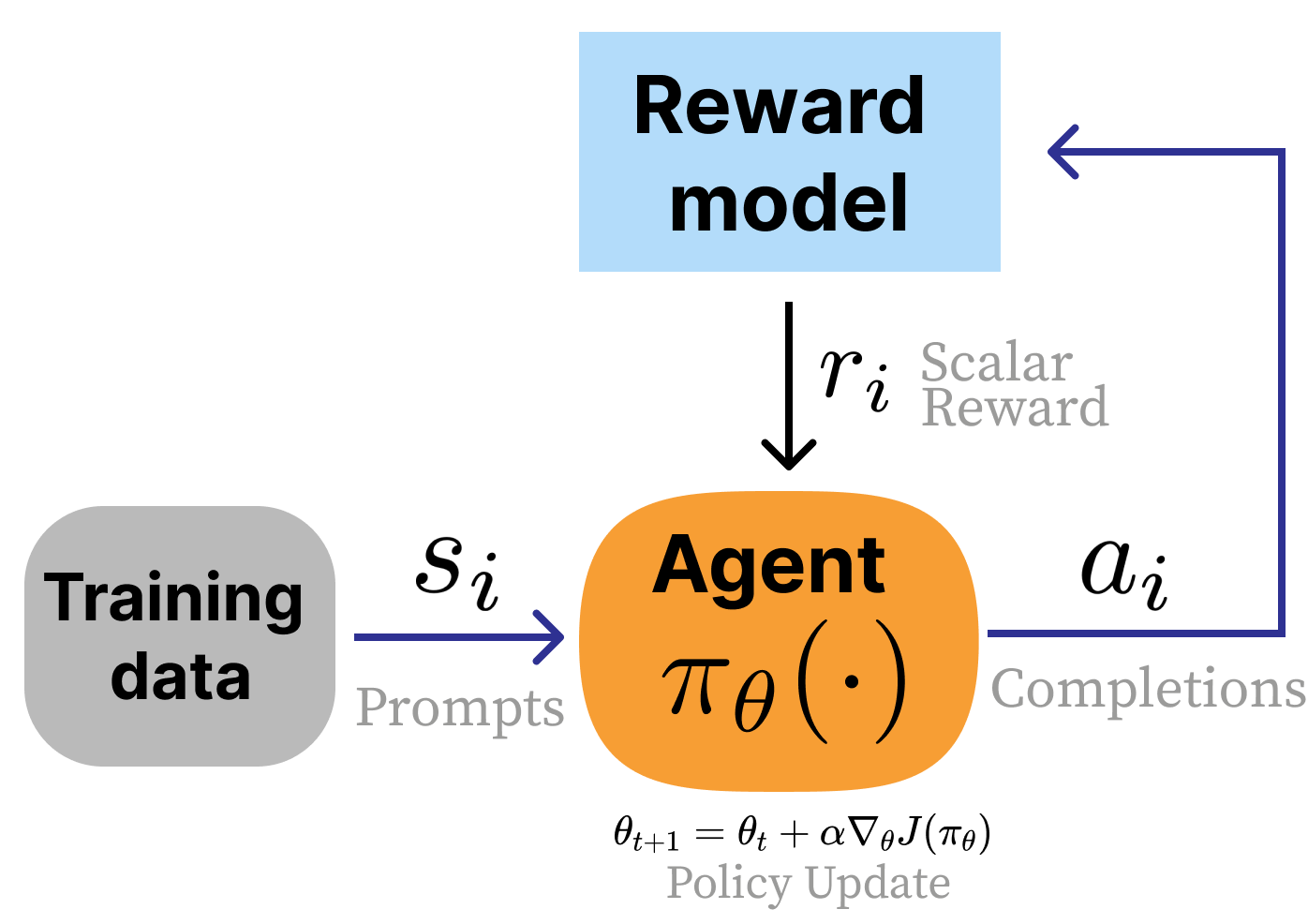

RLHF

- No environment — prompts sampled from a dataset

- Reward is learned from human preferences (a proxy)

- Response-level reward (bandit-style, not per-token)

- Regularized with KL penalty to stay close to the base model

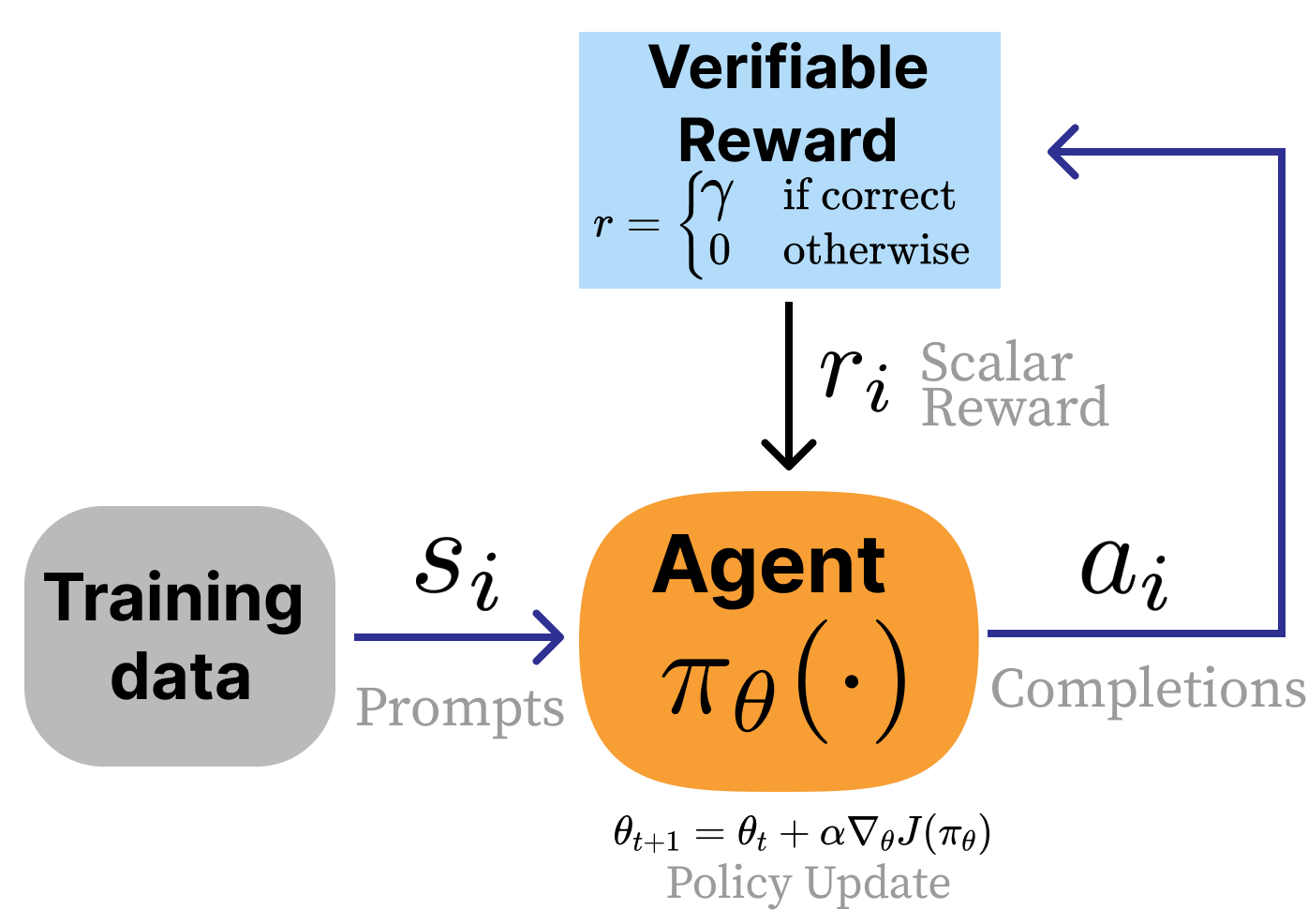

Reinforcement learning with verifiable rewards (RLVR)

Apply the same RL algorithms to LLMs when the answer can be checked directly. No need to train a reward model:

- E.g. Math: check the final answer. Code: run the tests.

- No learned reward model, so no proxy objective

- Enables scaling RL compute on reasoning tasks

- Unlocked inference-time scaling: spending more compute per problem at generation time increases performance log-linearly w.r.t. compute

- RLVR was named by Tülu 3 (Lambert et al., 2024) and popularized by DeepSeek R1 (Guo et al., 2025)
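A minimal sketch of a verifiable reward for math, assuming the final answer is the last number in the completion. The function names and the parsing rule are hypothetical; real pipelines usually require a fixed answer format and more robust parsing:

```python
import re


def extract_final_answer(completion):
    """Return the last number in the completion as a string, or None."""
    matches = re.findall(r"-?\d+(?:\.\d+)?", completion.replace(",", ""))
    return matches[-1] if matches else None


def math_reward(completion, reference_answer):
    """1.0 if the final answer matches the reference, else 0.0. No learned model."""
    answer = extract_final_answer(completion)
    if answer is None:
        return 0.0
    return 1.0 if float(answer) == float(reference_answer) else 0.0


print(math_reward("12 * 12 = 144, so the answer is 144.", "144"))  # 1.0
print(math_reward("I think it is 143", "144"))                     # 0.0
```

The reward is exact for this checker's definition of "correct", which is what separates it from a learned proxy.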

Comparing classical RL vs. LLM RLHF and RLVR

| | Classical RL | RLHF | RLVR |
|---|---|---|---|
| Reward | Environment | Learned (proxy) | Verifiable (exact) |
| State transitions | Yes | No | No |
| Reward granularity | Per-step | Per-response | Per-response |
| Primary challenge | Explore-Exploit Trade-off | Over-optimization | Task generalization |
| Example | CartPole | Chat style tuning | Math reasoning |

The path to modern RLHF

- Ziegler 2019 (Ziegler et al., 2019) — first RLHF on language models

- InstructGPT (Ouyang et al., 2022) — the canonical RLHF recipe behind ChatGPT

- Constitutional AI (Bai et al., 2022) — Introduced early methods for AI feedback in Claude

- DPO (Rafailov et al., 2023) — direct preference optimization (DPO) without a reward model

- Llama 3 (Grattafiori et al., 2024) and Tülu 3 (Lambert et al., 2024) — modern multi-stage recipes

- DeepSeek R1 (Guo et al., 2025) — popularized RLVR

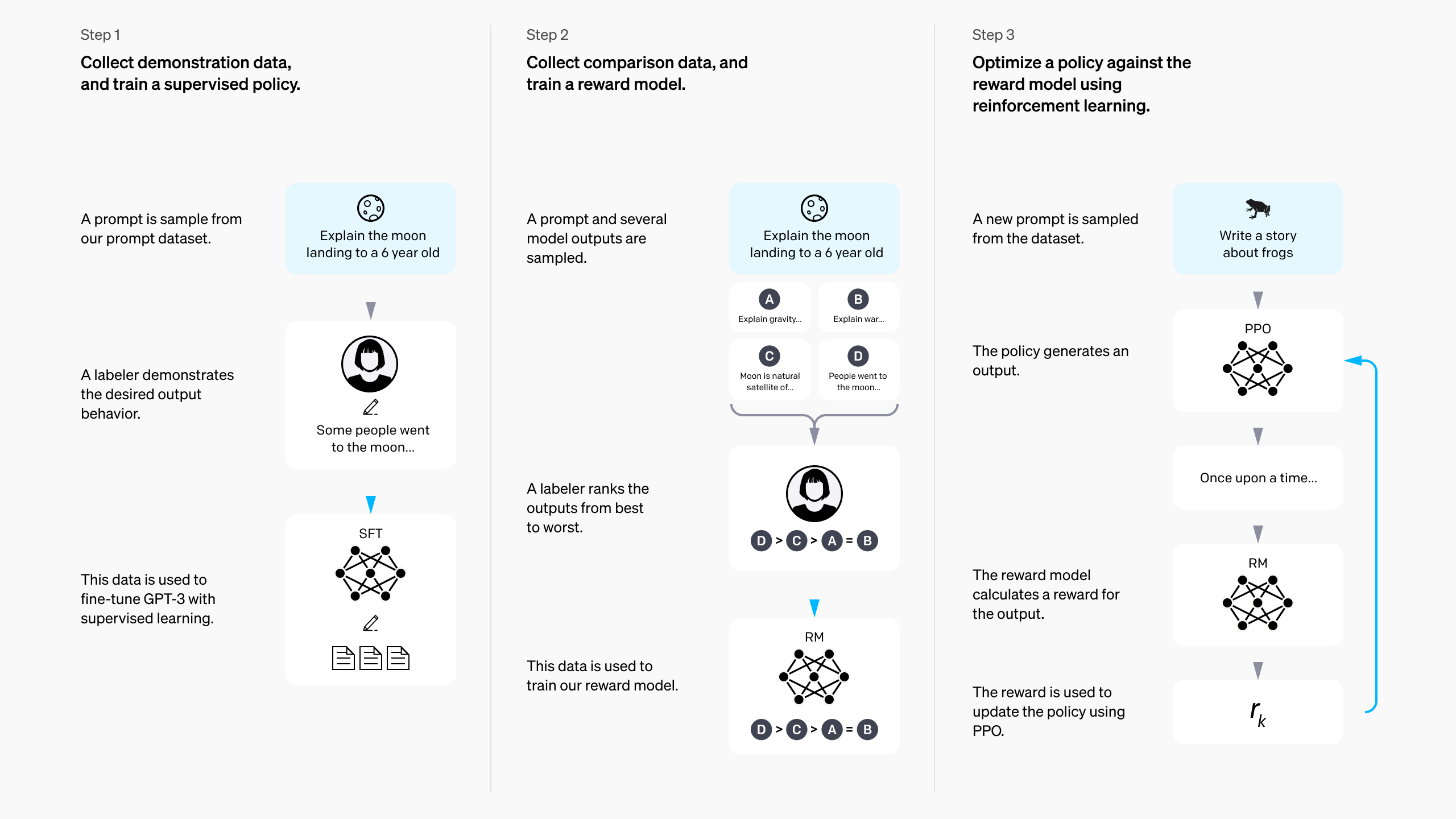

InstructGPT’s 3-step RLHF recipe

Step 1/3: Instruction fine-tuning (IFT)

The foundation of post-training. Also called Supervised Fine-tuning (SFT):

- Start from a pretrained language model

- Collect demonstrations of desired assistant behavior

- Train with standard supervised learning on prompt-response pairs (with a different batch size, learning rate, etc. than pretraining).

- Model can now answer questions.

Easy to use IFT to quickly adapt a base model to many domains.
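A sketch of the supervised loss on one prompt-response pair. It assumes the loss is averaged over response tokens only, with prompt tokens masked out. That masking is a common choice, not something stated in these slides, and the log-probabilities are made-up numbers:

```python
def sft_loss(token_logprobs, is_response_token):
    """Mean negative log-likelihood over response tokens only.

    token_logprobs[t] is the model's log-probability of the t-th token;
    is_response_token[t] is False for prompt tokens (masked out).
    """
    picked = [-lp for lp, keep in zip(token_logprobs, is_response_token) if keep]
    return sum(picked) / len(picked)


# Two prompt tokens (ignored) followed by two response tokens.
print(sft_loss([-5.0, -5.0, -1.0, -3.0], [False, False, True, True]))  # 2.0
```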

You are a helpful, harmless assistant. A system message like this can be used to steer the model to specific personas or behaviors.

Write me a short poem about an optimistic goldfish.

Bright little goldfish / Finds a sunrise in each wave / Small bowl, endless hope

Step 2/3: Reward modeling

Overview:

- Collect comparisons between two model outputs for the same prompt

- RLHF gets its name from collecting human feedback between completions, but today much of it is AI feedback

- Train a reward model r_\phi(x, y) to score preferred completions higher

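The probability model (Bradley-Terry) says a response should win when it gets a higher reward score:

P(y_w \succ y_l \mid x) = \sigma\!\left(r_\phi(x, y_w) - r_\phi(x, y_l)\right)

Training then minimizes the negative log-likelihood of the preferred response beating the rejected one, where \sigma is the logistic sigmoid:

\mathcal{L}(\phi) = -\mathbb{E}_{(x, y_w, y_l)}\left[\log \sigma\!\left(r_\phi(x, y_w) - r_\phi(x, y_l)\right)\right]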

Notation:

- x is the prompt

- y_w is the winning response

- y_l is the losing response

- r_\phi(x, y) is the trained reward model
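A small sketch of this loss in the standard Bradley-Terry form, where the win probability is the sigmoid of the reward difference. The scalar rewards below are made-up stand-ins for r_\phi(x, y_w) and r_\phi(x, y_l):

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def preference_probability(reward_chosen, reward_rejected):
    """P(y_w beats y_l | x) under the Bradley-Terry model."""
    return sigmoid(reward_chosen - reward_rejected)


def reward_model_loss(pairs):
    """Mean negative log-likelihood over (reward_chosen, reward_rejected) pairs."""
    nll = [-math.log(preference_probability(rw, rl)) for rw, rl in pairs]
    return sum(nll) / len(nll)


print(preference_probability(1.0, 1.0))      # 0.5: equal scores, coin flip
print(reward_model_loss([(2.0, 0.0)]))       # small: ranking agrees with the label
print(reward_model_loss([(0.0, 2.0)]))       # large: ranking disagrees
```

Only the difference between the two scores matters, so the reward model's absolute scale is not pinned down by this loss.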

Step 2/3: Reward modeling

The reward used in RLHF is the model predicting the probability that a given piece of text would be the "winning" or "chosen" completion in a pair/batch. Clever!

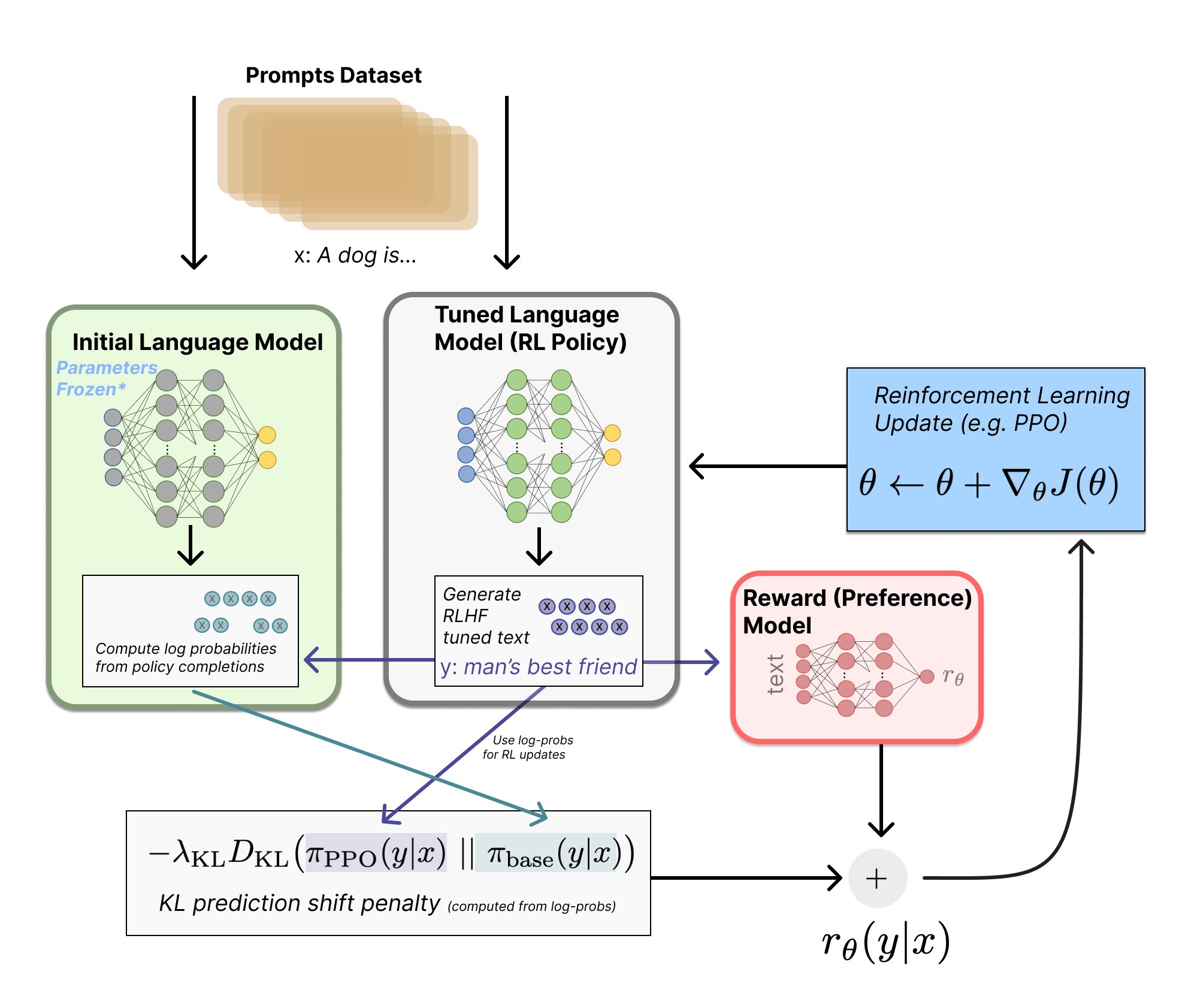

Step 3/3: RL against the reward model

Where everything comes together (and RLHF gets its name):

- Sample a batch of prompts x_i from the dataset \mathcal{D}

- Generate completions y_i \sim \pi_\theta(\cdot \mid x_i) from the model being trained

- Score them with the reward model r_\phi(x_i, y_i)

- Add a KL penalty so the policy stays close to the SFT/reference model.

- Update the policy with a policy-gradient RL algorithm (Proximal Policy Optimization, PPO in InstructGPT & ChatGPT)
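The steps above, sketched on a toy problem. Assumptions that are not from the slides: the policy is a softmax over three canned responses, the `REWARD` table stands in for a trained reward model, and the update is plain REINFORCE rather than PPO (which adds clipping, a value function, and batching):

```python
import math
import random

RESPONSES = ["short answer", "helpful detailed answer", "off-topic rant"]
# Hypothetical stand-in for reward model scores r_phi(x, y).
REWARD = {"short answer": 0.2, "helpful detailed answer": 1.0, "off-topic rant": -1.0}


def softmax(logits):
    m = max(logits)
    exps = [math.exp(l - m) for l in logits]
    total = sum(exps)
    return [e / total for e in exps]


def train(steps=500, lr=0.1, beta=0.05, seed=0):
    rng = random.Random(seed)
    ref_probs = softmax([0.0, 0.0, 0.0])  # frozen reference policy
    logits = [0.0, 0.0, 0.0]              # trainable policy, starts at the reference
    for _ in range(steps):
        probs = softmax(logits)
        i = rng.choices(range(3), weights=probs, k=1)[0]  # sample y ~ pi_theta
        reward = REWARD[RESPONSES[i]]                      # score the sample
        # KL penalty estimated from the sampled response's log-ratio.
        shaped = reward - beta * (math.log(probs[i]) - math.log(ref_probs[i]))
        # REINFORCE: d log pi(i) / d logit_j = 1[i == j] - probs[j]
        for j in range(3):
            grad = (1.0 if j == i else 0.0) - probs[j]
            logits[j] += lr * shaped * grad
    return softmax(logits)


print(dict(zip(RESPONSES, train())))
```

Probability mass moves toward the highest-reward response while the penalty discourages drifting far from the reference.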

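The RLHF objective, unpacked

\max_{\pi}\; \mathbb{E}_{x \sim \mathcal{D}}\left[\, \mathbb{E}_{y \sim \pi(\cdot \mid x)}\left[ r_\phi(x, y) \right] - \beta\, D_{\mathrm{KL}}\!\left(\pi(\cdot \mid x)\,\|\,\pi_{\mathrm{ref}}(\cdot \mid x)\right) \right]

The reference model \pi_{\mathrm{ref}} keeps the policy anchored to the SFT model.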

D_{\mathrm{KL}}\!\left(\pi(\cdot \mid x)\,\|\,\pi_{\mathrm{ref}}(\cdot \mid x)\right) measures how far the new policy moves from that reference on prompt x.

\beta controls the tradeoff between improving behavior and staying close to what the model already knows.
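In practice the KL term is often estimated from the sampled response itself, using the log-probability ratio between the policy and the reference. A sketch under that assumption, with made-up per-token log-probabilities:

```python
def kl_penalized_reward(rm_score, policy_token_logprobs, ref_token_logprobs, beta):
    """Reward model score minus beta times the sampled log-ratio.

    The log-ratio log pi(y|x) - log pi_ref(y|x), averaged over samples
    y ~ pi, is an estimate of the KL divergence on prompt x.
    """
    log_ratio = sum(policy_token_logprobs) - sum(ref_token_logprobs)
    return rm_score - beta * log_ratio


# Policy identical to the reference: no penalty.
print(kl_penalized_reward(1.0, [-1.0, -1.0], [-1.0, -1.0], beta=0.5))  # 1.0
# Policy is more confident than the reference on this response: penalized.
print(kl_penalized_reward(1.0, [-0.5, -0.5], [-1.0, -1.0], beta=0.5))  # 0.5
```

A larger \beta shrinks the effective reward faster as the policy drifts, which is the tradeoff described above.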

What if we optimize this more directly?

Direct Preference Optimization (DPO)

- Derived the gradient toward the optimal solution, \pi^*, to the above equation

- Eliminated the need for a separate reward model (via training an implicit one)

- Train directly on preferred (y_w) vs. rejected (y_l) responses to a prompt (x)

- Far simpler to implement

- Far cheaper to run

- Achieves ~80% or more of the final performance

- I used it to build models like Zephyr-Beta, Tülu 2/3, Olmo 2/3, etc.
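A sketch of the DPO loss for a single preference pair, following the form in Rafailov et al. (2023). The inputs are summed log-probabilities of each response under the policy and the frozen reference; the numbers are made up for illustration:

```python
import math


def dpo_loss(policy_logp_w, policy_logp_l, ref_logp_w, ref_logp_l, beta=0.1):
    """-log sigmoid(beta * (log-ratio of chosen minus log-ratio of rejected))."""
    margin = beta * ((policy_logp_w - ref_logp_w) - (policy_logp_l - ref_logp_l))
    return math.log1p(math.exp(-margin))  # equals -log(sigmoid(margin))


# Policy equals reference: the implicit reward margin is zero, loss is log(2).
print(dpo_loss(-10.0, -12.0, -10.0, -12.0))
# Policy raised the chosen response and lowered the rejected one: lower loss.
print(dpo_loss(-8.0, -14.0, -10.0, -12.0))
```

The implicit reward is \beta times the policy-to-reference log-ratio, which is why no separate reward model is trained.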

How training recipes have evolved

| | InstructGPT (2022) | Tülu 3 (2024) | DeepSeek R1 (2025) |
|---|---|---|---|
| Instruction data | ~10K | ~1M | 100K+ |
| Preference data | ~100K | ~1M | On-policy |
| RL stage | ~100K prompts | ~10K (RLVR) | N/A |

An overall trend is to use far more compute across all the stages, but shifting more to RLVR.

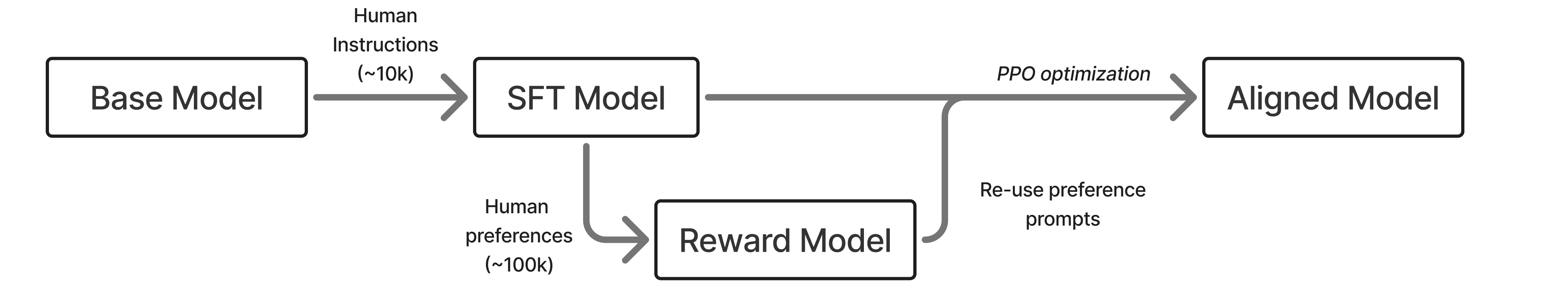

The early days: InstructGPT

Early on, RLHF had a well-documented, simple enough approach.

- InstructGPT made the classic three-stage recipe canonical: SFT, reward modeling, then RL against the reward model. OpenAI even hinted that the original ChatGPT used this!

- This became the intellectual template for much of modern post-training.

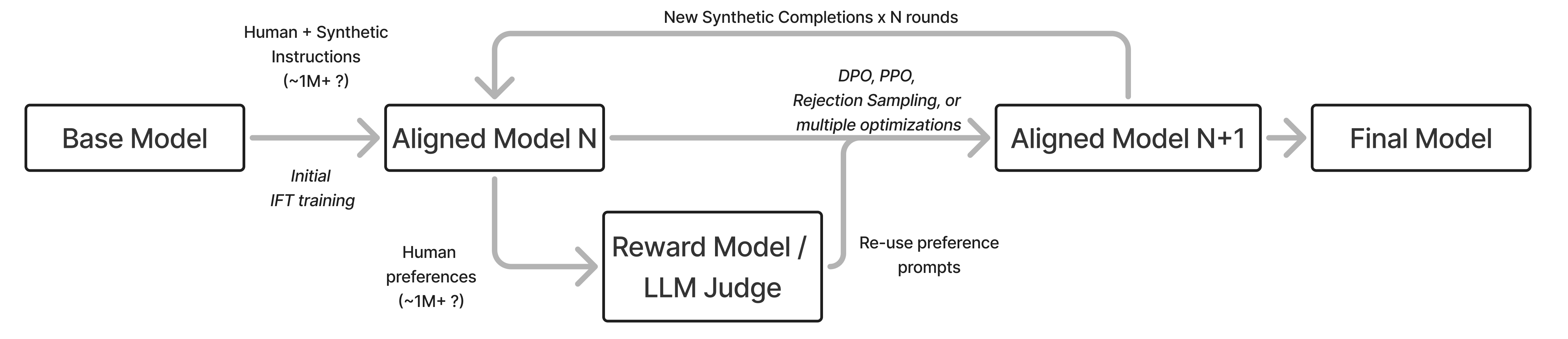

From RLHF to post-training

What began as an “RLHF” recipe evolved into a complex series of steps to get the final, best model (e.g. Nemotron 4 340B, Llama 3.1).

- Modern systems keep the same core idea of using multiple optimizers with different strengths and weaknesses, but add more stages, more data, and more filtering.

- This trend has only continued, and recipes ebb and flow, as tools like RLVR and model merging change the scope of what is doable in different ways.

From RLHF to “post-training”

As time has passed since ChatGPT, the field has gone through multiple distinct phases (roughly):

- 2023: Simple SFT for better chatbots and reproducing RLHF fundamentals (Alpaca, Vicuna, etc.)

- 2024: DPO dominates open models and training stages expand (Zephyr-beta, Tülu 2, etc.)

- 2025: RLVR, complex recipes (Tülu 3, Olmo 3, Nemotron 3, R1, etc.)

- 2026: Agentic training, multi-turn RL, etc.

During 2024 the field shifted its focus to post-training: training stages evolved beyond the InstructGPT-style recipe, DPO proliferated, and RLHF was largely viewed as one tool (that you may not even need).

An intuition for post-training

RLHF’s reputation was that it contributes little to the final language model.

“A model’s knowledge and capabilities are learnt almost entirely during pretraining, while alignment teaches it which subdistribution of formats should be used when interacting with users.”

LIMA: Less Is More for Alignment (2023)

Sometimes this view of alignment (or RLHF) teaching “format” made people think that post-training only made minor changes to the model. This would describe finetuning as “just style transfer.”

The base model trained on trillions of tokens of web text has seen and learned from an extremely broad set of examples. The model at this stage contains far more latent capability than early post-training recipes were able to expose.

The question is: How does post-training interact with these?

An intuition for post-training

An example, OLMoE — same base model family, updated only post-training:

- OLMoE-1B-7B-0924-Instruct (Sep. 2024): 38.44 avg. eval score

- OLMoE-1B-7B-0125-Instruct (Jan. 2025): 45.62 avg. eval score

Base models determine the ceiling. Post-training’s job has been to reach it.

An intuition for post-training

“A model’s knowledge and capabilities are learnt almost entirely during pretraining, while alignment teaches it which subdistribution of formats should be used when interacting with users.”

LIMA: Less Is More for Alignment (2023)

“The superficial alignment hypothesis (SAH) posits that large language models learn most of their knowledge during pre-training, and that post-training merely surfaces this knowledge.”

Operationalising the Superficial Alignment Hypothesis via Task Complexity (2026)

The second paper, 3 years later, matches my intuition for post-training.

Beyond elicitation: The scaling RL era of post-training

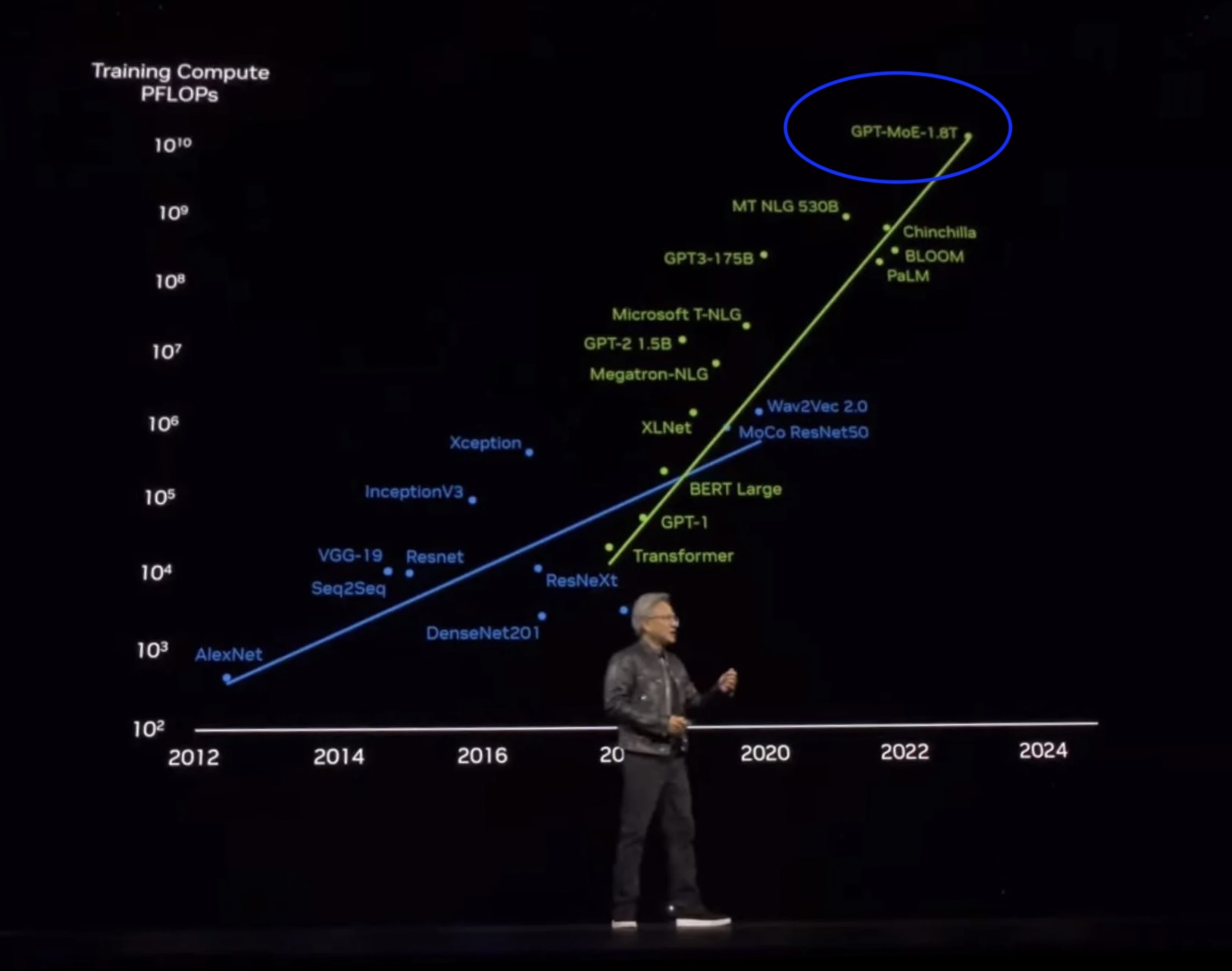

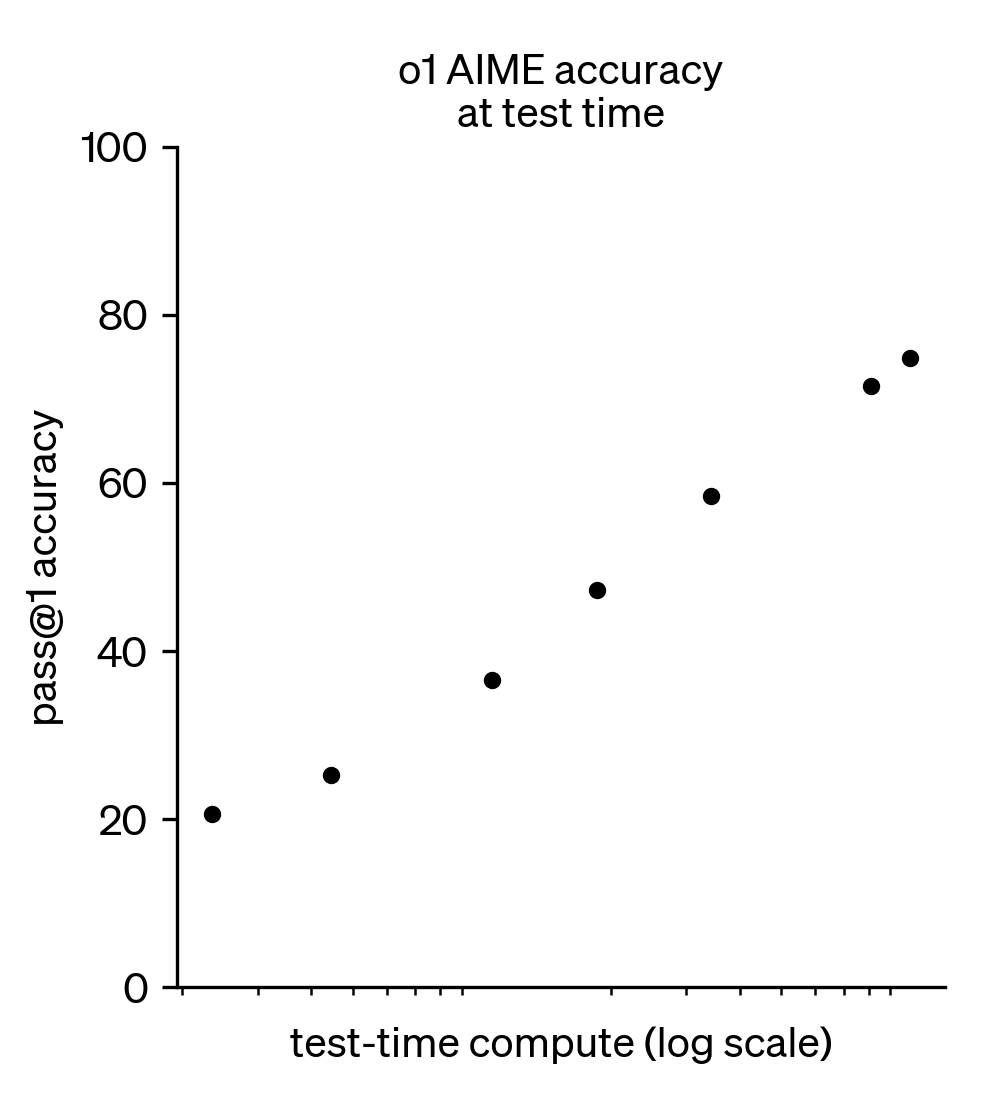

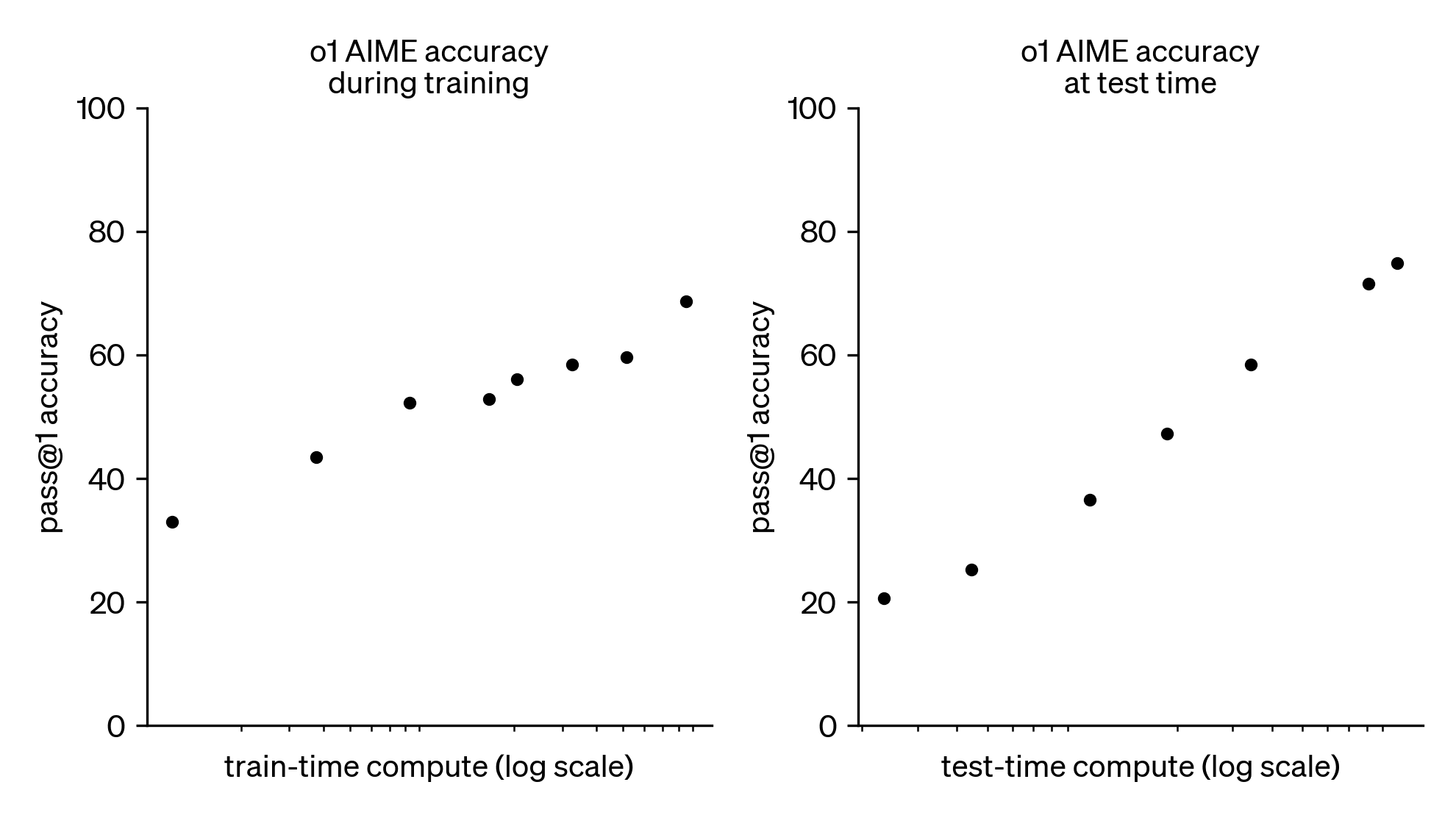

OpenAI’s seminal scaling plot with o1-preview

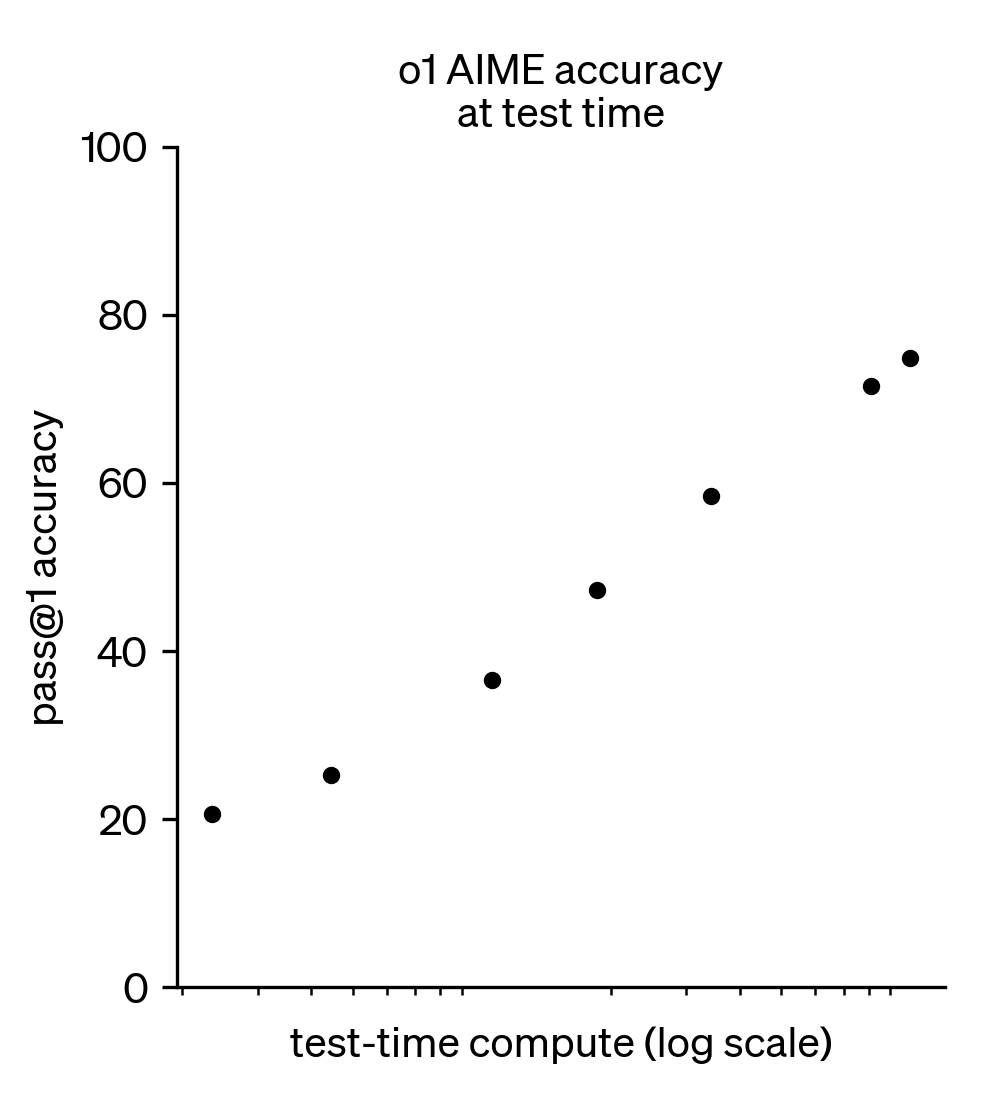

o1: Test-time scaling

A log-linear relationship between inference compute (number of tokens generated) and downstream performance.

- This is a fundamental property of models, unlocked in its popular form with RLVR

- Can be done in many ways: One long chain of thought (CoT) sequence, multiple agents in parallel, or mixes of the two

- Improving inference-time scaling changes the slope and offset of the curve
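To make "slope and offset" concrete, a sketch that fits score = offset + slope * log10(compute) by least squares. The data points are invented for illustration and are not measurements from any model:

```python
import math


def fit_log_linear(compute, scores):
    """Least-squares fit of score = offset + slope * log10(compute)."""
    xs = [math.log10(c) for c in compute]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(scores) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, scores))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    offset = mean_y - slope * mean_x
    return slope, offset


# Hypothetical: tokens generated per problem vs. benchmark score.
tokens = [1e3, 1e4, 1e5]
scores = [30.0, 45.0, 60.0]
slope, offset = fit_log_linear(tokens, scores)
print(slope, offset)  # each 10x in tokens adds `slope` points
```

Under a fit like this, each constant gain in score costs a constant multiple of compute, which is why the plots use a log-scale x-axis.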

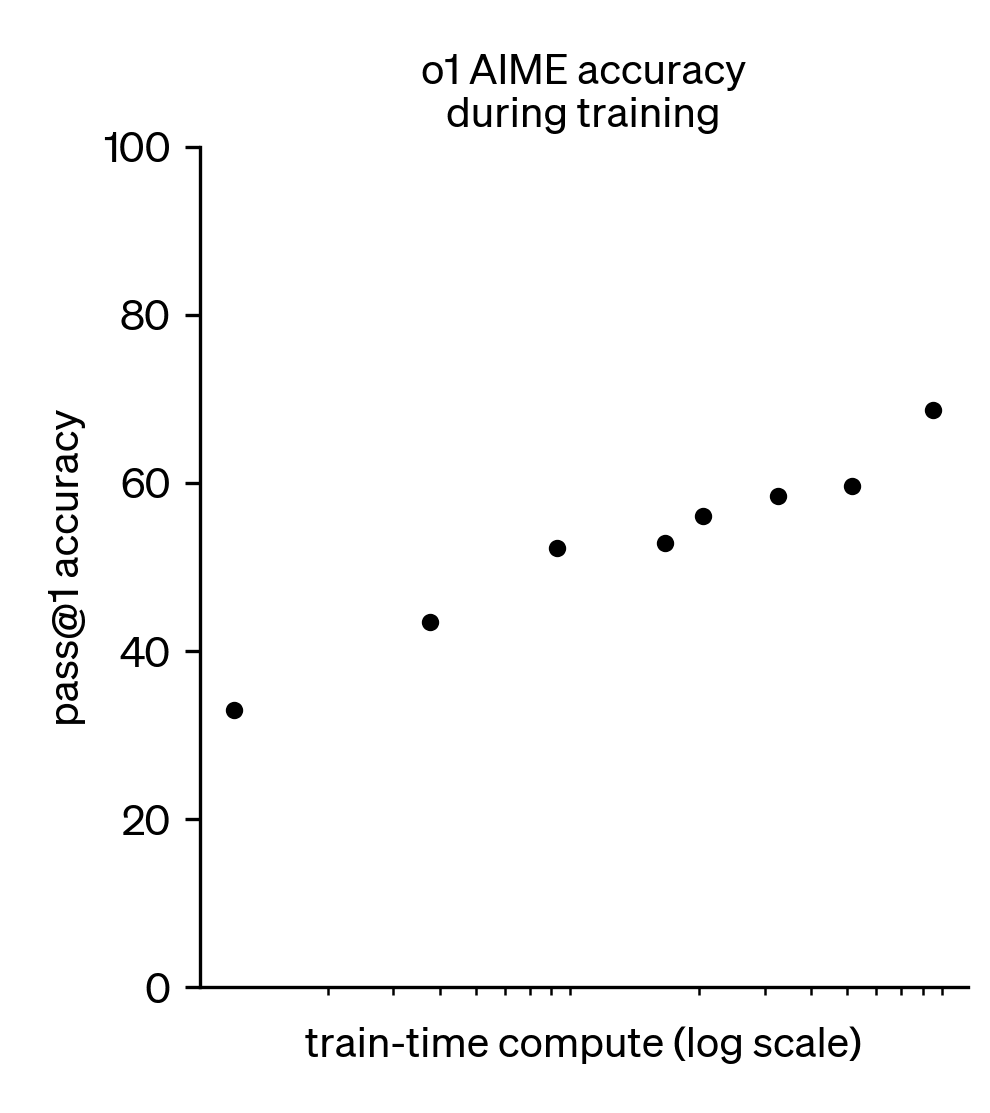

o1: Training-time scaling (with reinforcement learning!)

An often underplayed portion of the o1 release (and future reasoning/agentic models).

- Scaling reinforcement learning compute also has a log-linear return on performance!

- The core question: Is scaling RL training just eliciting more from the base model or actually teaching new abilities?

This results in a two-sided scaling landscape for training language models: both pretraining and post-training scale. The third place to scale is inference (no weight updates there).

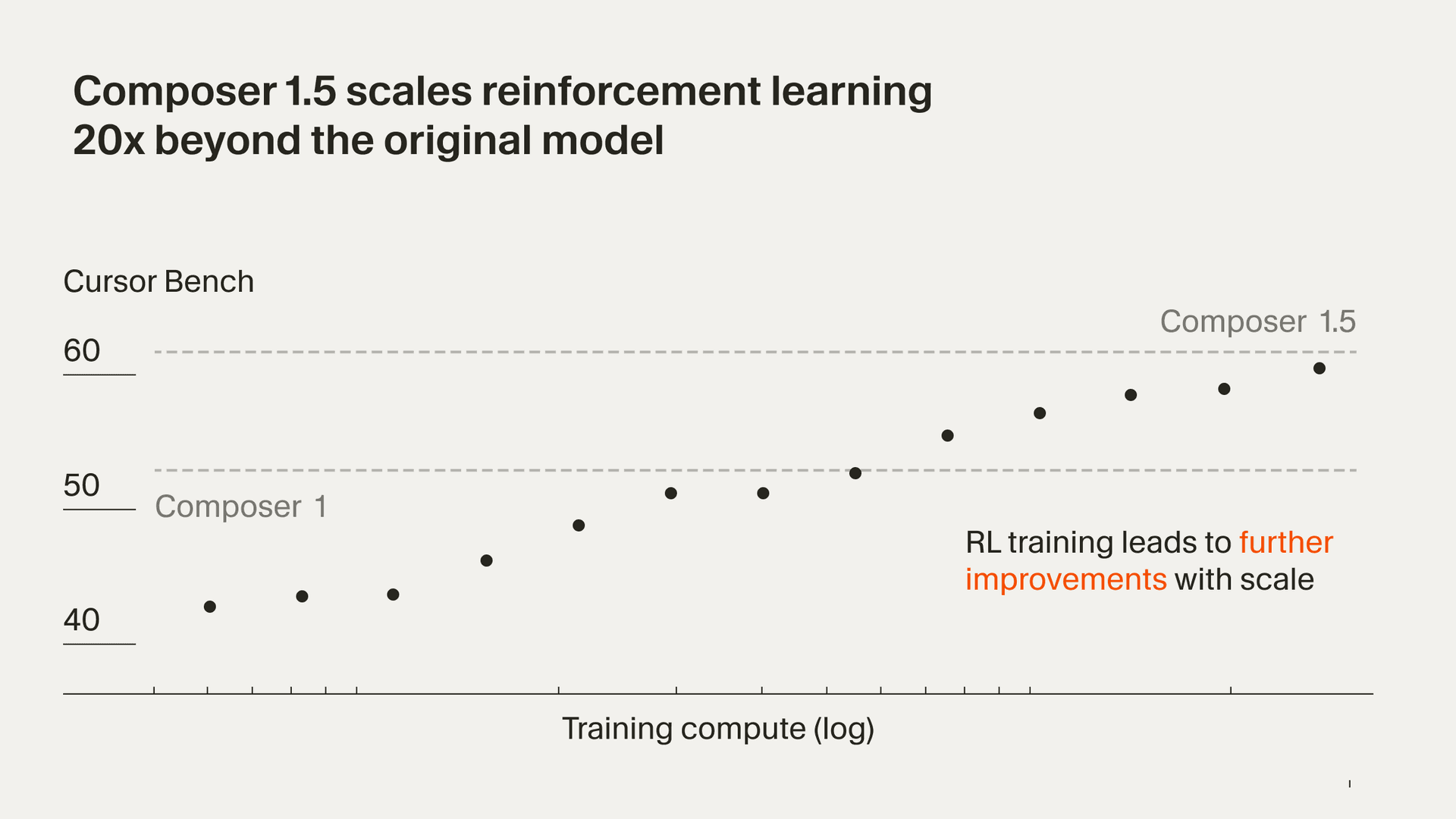

Cursor Composer 1.5: RL scaling

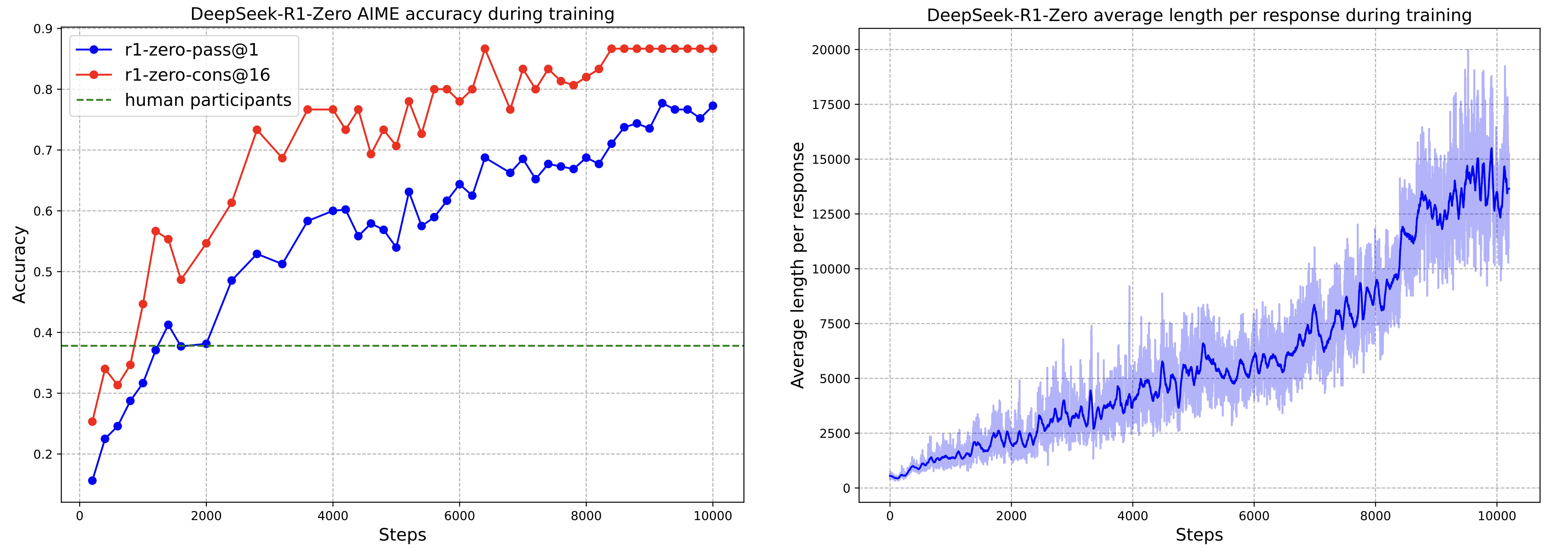

DeepSeek-R1-Zero: RL scaling

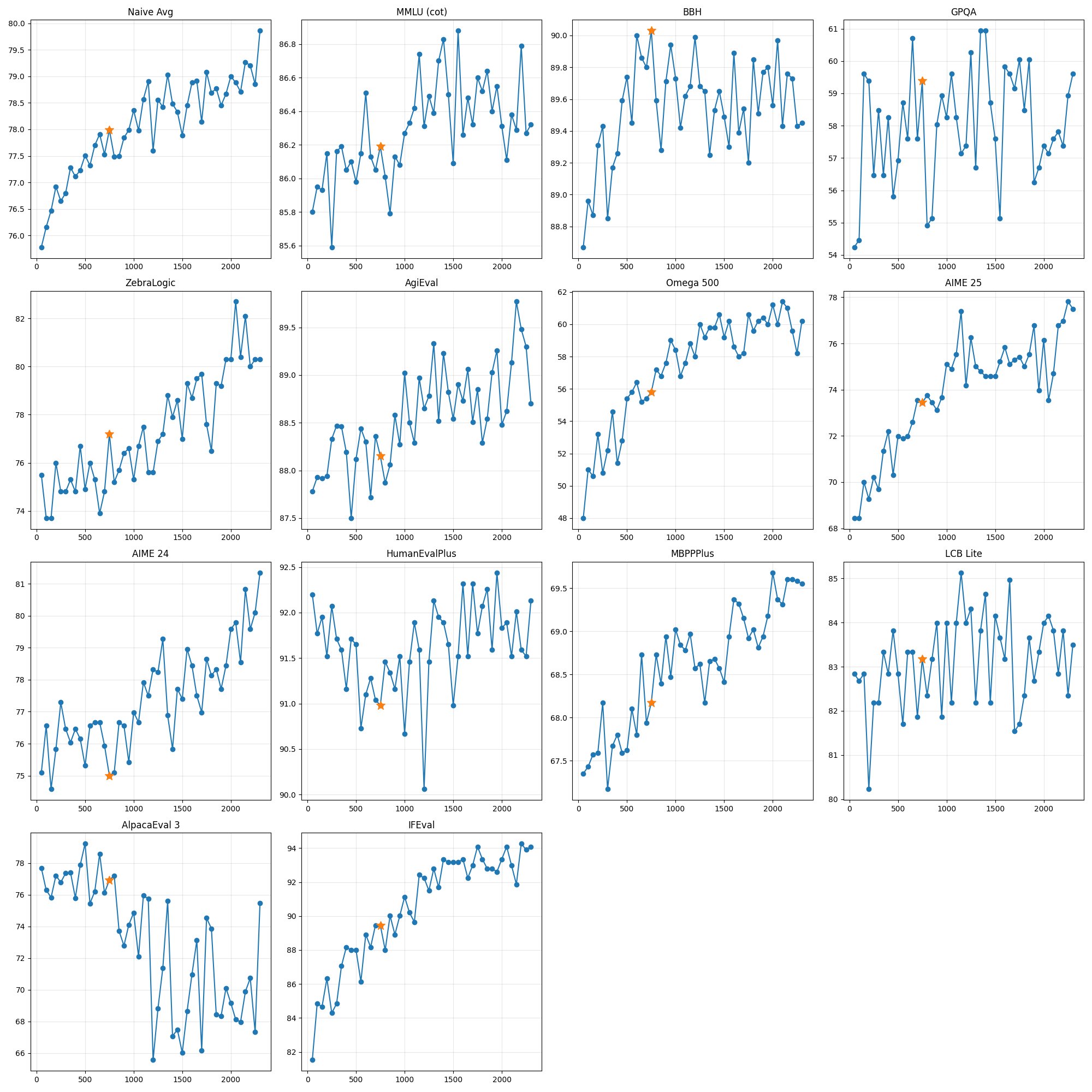

Olmo 3.1: extending the RL run

One of the few “fully open” large-scale RL runs to date.

- Training a general, 32B reasoning model.

- Full RL training took about 28 days on 224 GPUs.

- Improvements in performance were very consistent across the run. In fact, they were still going up when we had to stop it!
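For a sense of scale, the run length converts to GPU-hours as follows. This is a rough figure, since the 28 days is approximate and the helper name is just for illustration:

```python
def gpu_hours(days, gpus):
    """Total GPU-hours for a run of `days` days on `gpus` GPUs."""
    return days * 24 * gpus


print(gpu_hours(28, 224))  # 150528, i.e. roughly 150K GPU-hours
```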

Where this leaves us

Post-training and RLHF are changing faster than maybe ever before.

- Language models are becoming “tool-use native” and are now about tools, harnesses (how you tell the model to use said tools), and much more than just weights

- RLHF and human preferences haven’t gone away, but are evolving far more slowly and out of the central gaze of the industry

- Building language models and doing research is changing rapidly with coding agents

This talk is ~lecture 1 of a larger course

- Introduction

- Key Related Works

- Training Overview

- Instruction Tuning

- Reward Models

- Reinforcement Learning

- Reasoning

- Direct Alignment

- Rejection Sampling

- What are Preferences

- Preference Data

- Synthetic Data & CAI

- Tool Use

- Over-optimization

- Regularization

- Evaluation

- Product & Character

- A. Definitions

- B. Style & Information

- C. Practical Issues

Full lecture slides coming to rlhfbook.com/course and YouTube @natolambert!

Thank you

Sorry I could not make it in person!

Contact: nathan@natolambert.com

Newsletter: interconnects.ai

rlhfbook.com